Beat the Streak, Day 12: My Grand Vision

Dear blog readers and beat-the-streak enthusiasts alike,

Today I want to talk about my grand vision for beat-the-streak modelling. I have always envisioned this solution for beat-the-streak, but never actually attempted it due to the complexity of the project and the limited time I have to work on it. I hope this could be the year that I start chipping away at putting this grand vision into practice. In this blog post, I want to explicitly write out this vision along with the sub-problems that would need to be solved to execute it. I have hinted at this vision in some earlier blog posts, but now I want to dive a little deeper into exactly what this idea would entail.

In short, this vision requires modeling probability distributions at the finest level of granularity (pitches) and using those as building blocks for coarser granularity models (at-bats and games). The specific models that I am proposing to train / develop are listed below. Among these, there are three standard probabilistic classification models, two continuous distribution fitting models, and three known models (which could be lightly parameterized/learned). I have looked at some of these sub-problems in the past, but not all of them, and have not yet put them together into a unified grand model.

Previously, my approach was to directly learn a model at the coarsest level of granularity (games), which is ultimately what we care about for beat-the-streak. However, I have several reasons to believe it would be helpful to draw on finer granularity data, both to get more signal and richer features. One benefit of this approach is that an improvement to any sub-model should ultimately be reflected in the final coarse-grained model. In the sections below, I'll talk briefly about each sub-model and a natural baseline to consider (and hopefully improve over significantly).

Pitch Level Granularity

[Probabilistic Classification] P(Hit | launch angle, spray angle, exit velocity, context)

[Continuous Distribution Fitting] P(launch angle, spray angle, exit velocity | in play=True, x, y, type, speed, nastiness, context)

For the second model, we want to fit a distribution for launch angle, spray angle, and exit velocity, given that the ball was put in play, as a function of the pitch characteristics and other context (batter, etc.). A natural baseline model would ignore the context and just fit a model to P(launch angle, spray angle, exit velocity | in play = True). A natural model class to consider could be a mixture of Gaussians or a kernel density estimator. This sub-problem will be explored in greater depth in a future blog post.
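As a rough illustration of the context-free baseline, here is a minimal sketch of a kernel density estimator over (launch angle, spray angle, exit velocity). Everything in it is an assumption made for illustration: the function names are hypothetical, the kernel is a product of Gaussians with a diagonal bandwidth chosen by Scott's rule of thumb, and the data is synthetic rather than real batted-ball data.

```python
import math
import random

def scott_bandwidths(data):
    """Per-dimension bandwidths via Scott's rule: h_j = sigma_j * n^(-1/(d+4))."""
    n, d = len(data), len(data[0])
    factor = n ** (-1.0 / (d + 4))
    bandwidths = []
    for j in range(d):
        column = [row[j] for row in data]
        mean = sum(column) / n
        variance = sum((v - mean) ** 2 for v in column) / (n - 1)
        bandwidths.append(math.sqrt(variance) * factor)
    return bandwidths

def kde_logpdf(point, data, bandwidths):
    """Log-density of `point` under a product-Gaussian KDE centered on `data`."""
    d = len(point)
    log_norm = -sum(math.log(h) for h in bandwidths) - 0.5 * d * math.log(2 * math.pi)
    log_terms = [
        -0.5 * sum(((x - xi) / h) ** 2 for x, xi, h in zip(point, row, bandwidths))
        for row in data
    ]
    # Log-sum-exp keeps the average of kernels numerically stable.
    m = max(log_terms)
    log_mean = m + math.log(sum(math.exp(t - m) for t in log_terms)) - math.log(len(data))
    return log_norm + log_mean

# Synthetic stand-in for batted balls: (launch angle, spray angle, exit velocity).
# These numbers are invented for illustration only.
random.seed(0)
batted_balls = [
    (random.gauss(12, 25), random.gauss(0, 25), random.gauss(88, 13))
    for _ in range(500)
]

bandwidths = scott_bandwidths(batted_balls)
typical = kde_logpdf((12.0, 0.0, 88.0), batted_balls, bandwidths)
extreme = kde_logpdf((85.0, 44.0, 40.0), batted_balls, bandwidths)
print(f"log-density typical: {typical:.2f}, extreme: {extreme:.2f}")
```

A held-out average of `kde_logpdf` would be one natural score for comparing this baseline against context-aware models, since it is a proper log-likelihood on the same three variables.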

[Probabilistic Classification] P(outcome | x, y, type, speed, nastiness, context)

At Bat and Game Level Granularity

[Known Model, Learned Generalized Negative Binomial] P(team plate appearances | context) - depends on previous model

For the seventh model, we need to estimate the number of plate appearances that a team will get in a given game from the relevant context. There are multiple ways we could approach this problem. A natural option is to take the at-bat-level model, combined with the known lineup, to exactly calculate the distribution of plate appearances via the negative binomial distribution. This is something I scratched the surface on in Beat the Streak: Day 8, but it definitely needs revisiting in a future post.
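To make the negative binomial idea concrete, here is a minimal sketch under strong simplifying assumptions: every plate appearance ends in either an out or a non-out, each batter has a fixed out probability, outcomes are independent, and the game ends at exactly 27 outs (no double plays, extra innings, or skipped bottom of the ninth). The function name and the out probabilities are hypothetical. With a single shared out probability this reduces to the ordinary negative binomial; with per-batter probabilities, a small dynamic program over the batting order gives the exact distribution.

```python
import math

def team_pa_distribution(out_probs, total_outs=27, max_pa=80):
    """Return {n: P(team gets exactly n plate appearances)}.

    out_probs[i] is the assumed probability that lineup slot i makes an out
    in a single plate appearance; the lineup cycles in order.
    """
    # alive[k] = probability the game is still going with exactly k outs recorded.
    alive = [0.0] * total_outs
    alive[0] = 1.0
    dist = {}
    for n in range(1, max_pa + 1):
        q = out_probs[(n - 1) % len(out_probs)]
        # The game ends on PA n if one out remained and this batter makes it.
        dist[n] = alive[total_outs - 1] * q
        updated = [0.0] * total_outs
        for k in range(total_outs):
            updated[k] += alive[k] * (1 - q)      # batter reaches, outs unchanged
            if k + 1 < total_outs:
                updated[k + 1] += alive[k] * q    # batter is out, game continues
        alive = updated
    return dist

# Hypothetical lineup where every batter makes an out 68% of the time.
q = 0.68
dist = team_pa_distribution([q] * 9)

# Sanity check against the closed-form negative binomial for n = 38.
closed_form = math.comb(37, 26) * q**27 * (1 - q) ** 11
print(dist[38], closed_form)
```

Truncating at `max_pa` drops a small amount of tail probability, so the cap should sit well above the expected number of plate appearances.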

[Known Model, Exact Formula] P(hit in game | context) - depends on both models above
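One way such an exact formula could look, as a sketch only: given any distribution over team plate appearances and a per-plate-appearance hit probability p for the batter, P(hit in game) is the sum over n of P(team PA = n) times 1 - (1 - p)^k(n), where k(n) is how many times the batter's lineup slot comes up in n team plate appearances. This assumes the batter's plate appearances are independent with a constant hit probability and that he plays the whole game; the function names and numbers below are hypothetical.

```python
def batter_pa_count(team_pa, slot, lineup_size=9):
    """Number of plate appearances for lineup slot `slot` (0-indexed)
    when the team has `team_pa` plate appearances in total."""
    if team_pa <= slot:
        return 0
    return (team_pa - slot - 1) // lineup_size + 1

def prob_hit_in_game(team_pa_dist, hit_prob, slot):
    """P(at least one hit) = sum_n P(team PA = n) * (1 - (1 - p)^k(n))."""
    total = 0.0
    for team_pa, prob in team_pa_dist.items():
        k = batter_pa_count(team_pa, slot)
        total += prob * (1.0 - (1.0 - hit_prob) ** k)
    return total

# Toy distribution over team plate appearances (invented for illustration).
toy_dist = {36: 0.3, 38: 0.4, 41: 0.3}
leadoff = prob_hit_in_game(toy_dist, hit_prob=0.25, slot=0)
ninth = prob_hit_in_game(toy_dist, hit_prob=0.25, slot=8)
print(f"leadoff: {leadoff:.3f}, ninth: {ninth:.3f}")
```

The gap between the leadoff and ninth-slot numbers comes entirely from the extra plate appearances earlier lineup slots tend to get, which is why the plate appearance model feeds into this one.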

Next Steps

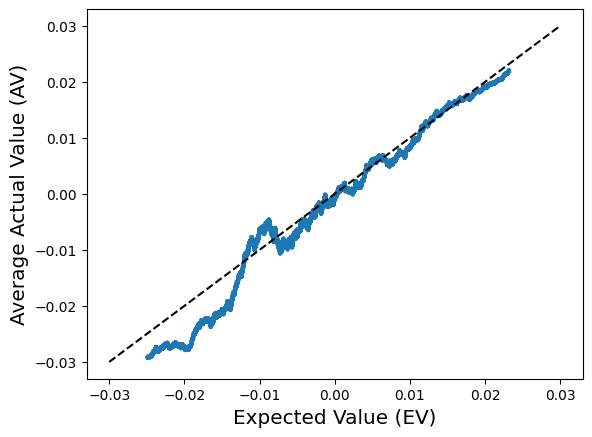

- Develop a robust evaluation framework

- Implement baselines for each sub-problem and maintain a leaderboard of sorts.

- Tackle each sub-problem one at a time.
