Challenge Problem: Taking a Quiz

I thought of this problem about two years ago and was going to send it out as the one of the weekly challenge problems for the ACM student chapter at UD, however I was unable to solve it when I first thought of it, and so I put it on the back-burner for a while. Recently, I revisited it and made some progress on it, but was still unable to find a strategy that can solve it at all, let alone efficiently. I am posting the problem to this blog in the hopes that you can help me figure it out by posting your ideas and code in the comments below. Anyway, here is the problem:

I'm confident there are many better strategies out there, and have thought of a couple myself, although I want to see what you can come up with. I'd be very impressed if you can come up with a strategy that solves it exactly (guaranteed optimality) and in reasonable amount of time even for large $n$. I'd also be interested in seeing other strategies you guys come up with, even if they aren't provably optimal.

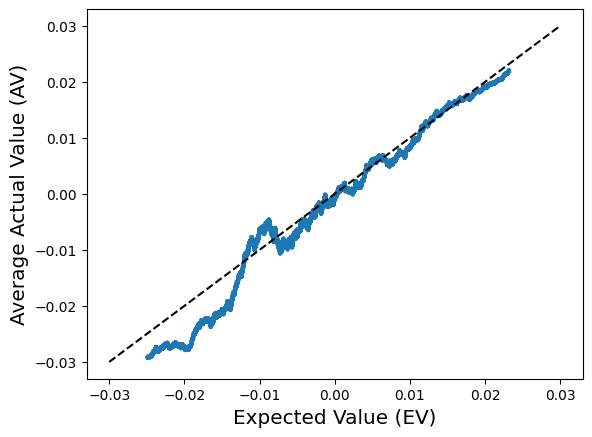

To make it easy for you to create and test strategies, I coded up a small python script that simulates any test taking strategy for 10,000 20-question quizzes. While it's fairly easy to exactly compute the expected score for a specific strategy (especially for the simple one I defined earlier), it's significantly harder to compute the expected score exactly for an arbitrary strategy. That is why I am relying on simulations to approximate this expected value. After 10,000 trials, the average should be close enough to the true expected value that we can trust it.

The top half of the code are the internals of the quiz and quiz-grading mechanism. It's not super important what's happening there, because you won't need to mess with that code, although it's pretty easy to understand if you want to. The second half of the code is where you should write your quiz-taking strategies. In order to do that, you will need to define a function with parameters: the number of questions on the quiz, and a grading function. The grading function consumes a numpy boolean array of length questions, and produces a pair containing the number of questions you got correct and your score (# correct - # retakes). The grading function keeps track of how many times you call it, so make sure you don't call it unless you want to submit your answers for grading. Once you are satisfied with your score, stop calling grading and terminate the function. Now just add your new function to the list of students, and everything else is handled for you. Note that I set up the code so that it would be impossible to cheat (i.e., look at the answer) without modifying the first half of the code.

You are taking a quiz with $n$ true/false questions that you didn't study for, but you are allowed to retake the quiz as many times as you want. Every time you take the quiz, you are told how many questions you got correct, but not which questions were right and wrong. Your final score is the number of answers you got correct on your last submission minus the number of times you retook the quiz. Create a program that will maximize your expected score on this quiz.I'm going to quickly clarify some possible ambiguities:

- The quiz doesn't change between submissions.

- Every question is equally likely to be true/false.

- You believe all $2^n$ possible solutions are equally likely (because you didn't study).

- You cannot score below 0.

I'm confident there are many better strategies out there, and have thought of a couple myself, although I want to see what you can come up with. I'd be very impressed if you can come up with a strategy that solves it exactly (guaranteed optimality) and in reasonable amount of time even for large $n$. I'd also be interested in seeing other strategies you guys come up with, even if they aren't provably optimal.

To make it easy for you to create and test strategies, I coded up a small python script that simulates any test taking strategy for 10,000 20-question quizzes. While it's fairly easy to exactly compute the expected score for a specific strategy (especially for the simple one I defined earlier), it's significantly harder to compute the expected score exactly for an arbitrary strategy. That is why I am relying on simulations to approximate this expected value. After 10,000 trials, the average should be close enough to the true expected value that we can trust it.

The top half of the code are the internals of the quiz and quiz-grading mechanism. It's not super important what's happening there, because you won't need to mess with that code, although it's pretty easy to understand if you want to. The second half of the code is where you should write your quiz-taking strategies. In order to do that, you will need to define a function with parameters: the number of questions on the quiz, and a grading function. The grading function consumes a numpy boolean array of length questions, and produces a pair containing the number of questions you got correct and your score (# correct - # retakes). The grading function keeps track of how many times you call it, so make sure you don't call it unless you want to submit your answers for grading. Once you are satisfied with your score, stop calling grading and terminate the function. Now just add your new function to the list of students, and everything else is handled for you. Note that I set up the code so that it would be impossible to cheat (i.e., look at the answer) without modifying the first half of the code.

import numpy as np

#############################

## Do not modify this code ##

#############################

class Quiz:

def __init__(self, questions):

self.questions = questions

self.__answers = np.random.rand(questions) < 0.5

self.turns = 0

self.correct = 0

self.score = 0

def guess(self, guesses):

self.correct = (self.__answers == guesses).sum()

self.score = max(0, self.correct - self.turns)

self.turns += 1

return self.correct, self.score

def evaluate(quiz, quiz_taker):

quiz_taker(quiz.questions, quiz.guess)

return quiz.score

def simulate(quiz_taker, questions, trials):

return np.mean([evaluate(Quiz(questions), quiz_taker) for _ in range(trials)])

###########################################

## Write your quiz-taking functions here ##

###########################################

## Add more functions like this

def stub_student(questions, grader):

guess = np.random.rand(questions) < 0.5 # guess the answers

score, correct = grader(guess) # grade the answers

# do nothing else - accept your current score

# Make random guess

# If at least 50% are correct, accept the score.

# Otherwise, invert every answer and guess again.

def two_guess(questions, grader):

guess = np.random.rand(questions) < 0.5

correct, score = grader(guess)

if score*2 < questions:

correct, score = grader(np.invert(guess))

students = [stub_student, two_guess]

for student in students:

print('%s: %0.4f' % (student.__name__, simulate(student, 20, 10000)))

Let $f_s(n)$ be the expected score for a quiz with $n$ questions under strategy $s$. Does there exist a strategy $s$ such that $ \lim_{n \rightarrow \infty} \frac{f_s(n)}{n} > \frac{1}{2} $? If so, prove it.If you come up with any good strategies, post them in the comments below!

Comments

Post a Comment